Global first

Write the coordination once as a protocol instead of scattering it across agent prompts and callbacks.

Global protocols for multi-agent LLM systems

Building multi-agent systems is hard because coordination logic ends up scattered across agent prompts, callbacks, and state machines no one fully understands. ZipperGen gives you a single global protocol: who sends what to whom, who runs which LLM, and who owns each decision, including tools, humans, and external services. ZipperGen projects it to each agent automatically and runs it concurrently.

Core projection theorems machine-checked in Lean 4.

Write the coordination once as a protocol instead of scattering it across agent prompts and callbacks.

Each lifeline receives the local program it needs: sends, receives, owned decisions, tool calls, and optional human control points.

If the workflow compiles, the coordination layer is structurally deadlock-free by construction.

Tiny workflow

The annotation @ Editor says that Editor owns the decision. ZipperGen

generates the control communication needed by the other lifelines.

@workflow

def write_tweet(topic: str @ User) -> str:

User(topic) >> Writer(topic)

Writer: tweet = draft(topic)

Writer(tweet) >> Editor(tweet)

Editor: approved = approve(tweet)

if approved @ Editor:

Editor(tweet) >> User(tweet)

else:

Editor(tweet) >> Writer(tweet)

Writer: tweet = revise(tweet)

Writer(tweet) >> User(tweet)

return tweet @ UserLarger example

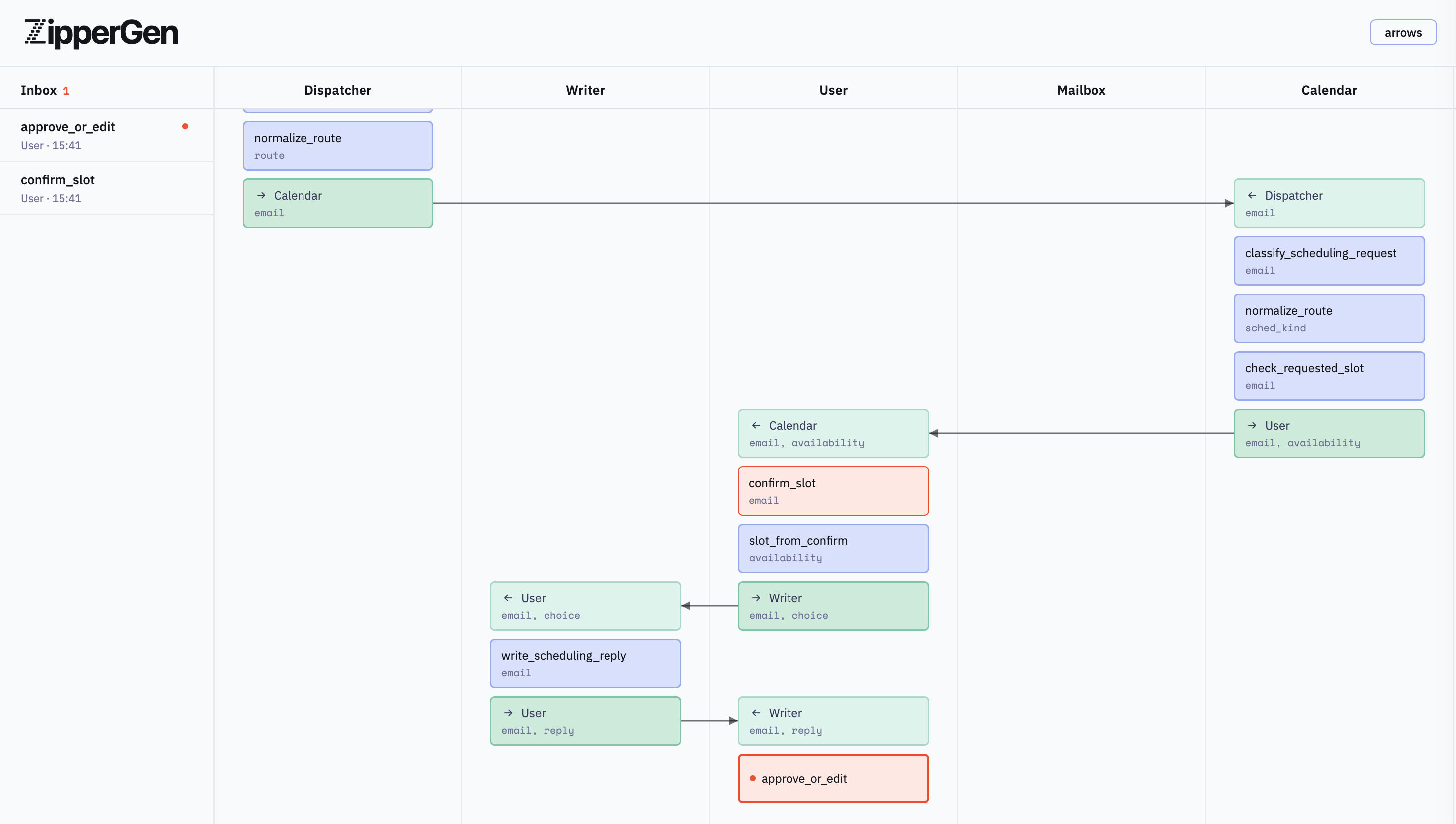

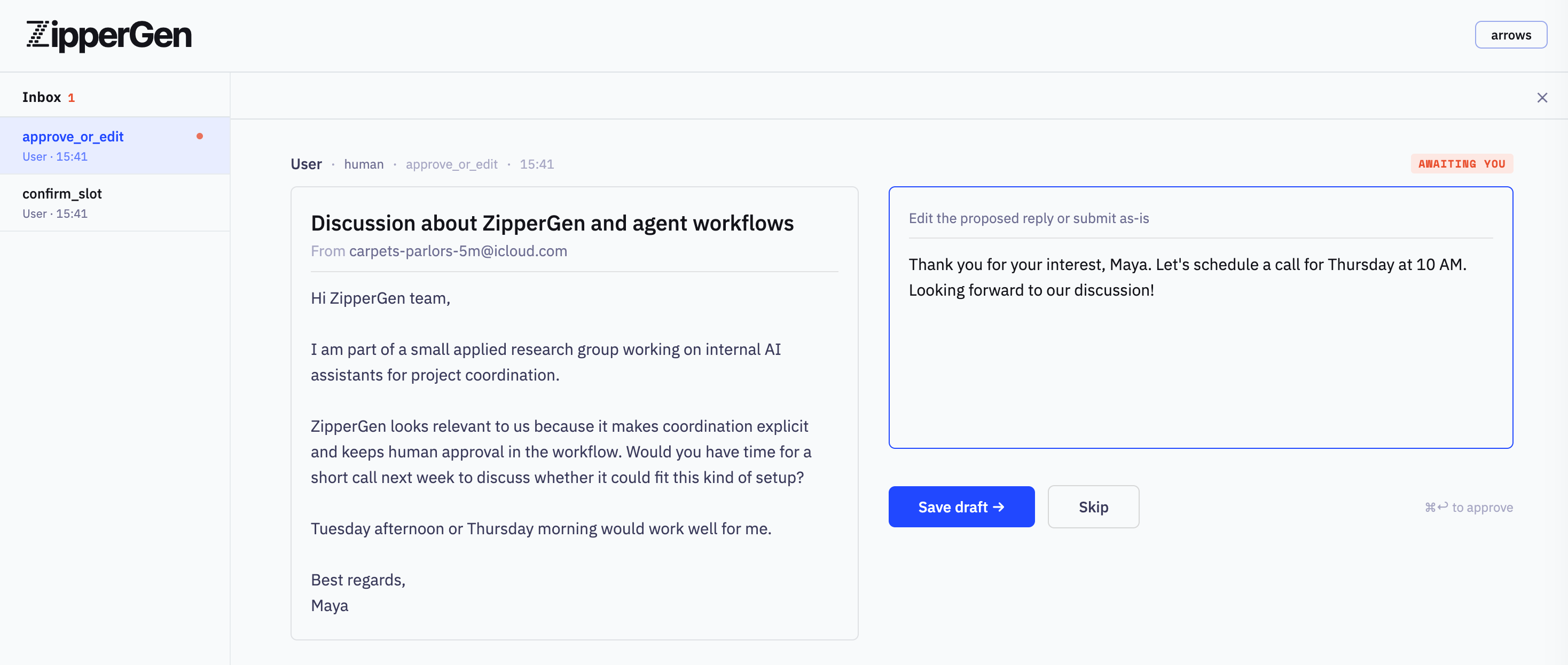

command_center.py runs two independent loops in parallel. One handles

incoming email: Mailbox polls the inbox, Dispatcher classifies

each message, and fragments handle scheduling, cancellations, tasks, and replies.

The other handles Telegram commands from the owner. Calendar,

Writer, and User are shared between both streams.

ZipperGen's projection ensures each receives exactly the messages it needs, from

whichever stream generated them, in the order the protocol requires.

Run it with mock data to see both streams live without any API keys:

python examples/command_center.py --mock@workflow

def command_center():

with parallel:

with branch:

while True @ Mailbox:

if mail_present() @ Mailbox:

Mailbox: email = pop_pending_email()

Mailbox(email) >> Dispatcher(email)

Dispatcher: route = classify_email(email)

Dispatcher: route = normalize_route(route)

if (route == "spam") @ Dispatcher:

...

elif (route == "scheduling") @ Dispatcher:

scheduling_branch(email)

elif (route == "task") @ Dispatcher:

task_branch(email)

reply_branch(email)

else:

reply_branch(email)

else:

Mailbox: _ = wait_briefly()

with branch:

while True @ Chat:

if chat_present() @ Chat:

Chat: chat_msg = pop_pending_chat()

Chat(chat_msg) >> Dispatcher(chat_msg)

Dispatcher: chat_route = classify_chat(chat_msg)

Dispatcher: chat_route = normalize_route(chat_route)

if (chat_route == "schedule_meeting") @ Dispatcher:

schedule_meeting_from_chat(chat_msg)

elif (chat_route == "create_task") @ Dispatcher:

create_task_from_chat(chat_msg)

...

else:

Chat: _ = wait_briefly()When locally received data can be stale, guards can read the latest causally visible state instead of a sequential log. Vector clocks and message-carried views make the guard result depend on the asynchronous communication structure.

latest_device_on = At[Device].on == True

if latest_device_on @ Indicator:

...Parallel regions run several sub-programs concurrently. Shared lifelines interleave their branch-local programs, and branch-specific channels preserve FIFO order where it matters.

with parallel:

with branch:

Orchestrator(candidate) >> TestRunner(candidate)

TestRunner: (test_status,) = run_tests(candidate)

TestRunner(test_status) >> Committer(test_status)

with branch:

Orchestrator(candidate) >> Security(candidate)

Security: (security_status,) = scan_security(candidate)

Security(security_status) >> Committer(security_status)Try it locally

The built-in mock backend returns placeholder model outputs, so examples can be run without API keys. Switch to OpenAI, Mistral, or Claude with one configuration line when you want real model calls. ZipperGen can also use OpenAI-compatible local model servers such as vLLM. The repository includes a small local Qwen/vLLM example.

git clone https://github.com/zippergen-io/zippergen.git

cd zippergen

pip install -e .

python examples/hello.py # two lifelines, one LLM call

python examples/command_center.py --mock # email triage + Telegram commands

python examples/parallel.py # fan-out / fan-in across branchesFormal foundation

The projection from a global protocol to per-agent local programs is syntax-directed, and deadlock-freedom follows by structural induction, with no runtime checking required. The core theorems are in the paper and machine-checked in Lean 4.

Bollig, Függer, Nowak. Provable Coordination for LLM Agents via Message Sequence Charts. arXiv:2604.17612 [cs.PL]

Bollig. Causal Past Logic for Runtime Verification of Distributed LLM Agent Workflows. arXiv:2605.20923 [cs.LO]

Start from the examples, inspect the generated message sequence chart, and decide whether global protocols fit your agent system.